A strange imbalance has long dominated the tech environment. Ideas are endless, presentations are even more plentiful, yet truly working AI solutions are far fewer than the headlines suggest. Companies speak about “artificial intelligence” as if it were a universal remedy for every operational issue, but most projects come to a halt at the first sober assessment of whether the concept is even feasible.

This isn’t technical pessimism. On the contrary—it’s discipline. It’s the rigorous validation of assumptions that separates serious engineering teams from those who “want to add AI because everyone else does.” Every project has boundaries: the volume and quality of available data, the budget, the timeline, legal limitations, and the state of the existing infrastructure. Without a systematic evaluation of these parameters, any idea eventually turns into a drawn-out experiment.

This is where an AI Project Feasibility Checker becomes essential—not another “hype generator,” but a cold, pragmatic tool that cuts through illusions and leaves only what can truly be built. It asks uncomfortable questions that most people prefer not to articulate. And the answers often determine whether your AI project becomes a real product—or joins the long list of ambitious but unrealizable concepts.

1. Does This Even Require AI?

Before assessing architectures, datasets, cloud costs, or model accuracy, there’s a simpler and far more fundamental question: does the problem actually require artificial intelligence at all? Surprisingly often, the honest answer is “no”.

1.1 The Automation Test: When AI Is Just an Expensive Shortcut

Many ideas that arrive labeled as “AI-driven” turn out to be nothing more than routine automation wrapped in fashionable vocabulary. If the expected output is deterministic—same input, same result every time—then no statistical model is required. A rule engine, an integration, or a basic workflow solves the problem faster, cheaper, and with fewer surprises.
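To make the distinction concrete, here is a minimal sketch of deterministic logic that needs no model at all. The ticket-routing scenario, the field names, and the rules are hypothetical. The point is that the same input always yields the same output, so the behavior can be tested exhaustively.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Ticket:
    # Hypothetical support-ticket fields, for illustration only.
    category: str
    amount: float
    is_vip: bool

def route(ticket: Ticket) -> str:
    """Deterministic routing: the same ticket always goes to the same queue."""
    if ticket.is_vip:
        return "priority"
    if ticket.category == "refund" and ticket.amount > 500:
        return "finance-review"
    if ticket.category == "refund":
        return "auto-refund"
    return "general"

print(route(Ticket("refund", 120.0, False)))  # auto-refund
print(route(Ticket("refund", 900.0, False)))  # finance-review
```

If every decision in an idea fits into rules like these, a rule engine or workflow tool is likely the better fit.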

This is not a downgrade. It’s a sign of engineering maturity. Well-designed automation often brings more immediate ROI than any machine learning experiment, especially in environments where data is incomplete, inconsistent, or simply unavailable.

1.2 The “AI Fairy Dust” Problem

Some projects inherit AI the way PowerPoint inherits transitions—because someone, somewhere, decided it should look more innovative. Executives ask for AI because competitors mention it, because investors expect it, or because internal presentations sound safer when they include the word “intelligence”.

But assigning AI to a task that does not need prediction, classification, or pattern recognition creates unnecessary technical debt. It also leads to inflated expectations: people assume the system will “learn”, “adapt”, or “understand”, when in reality the underlying logic could have been expressed in a few rules.

The result is predictable: disappointment, delays, budget overruns, and the quiet burial of the project months later.

1.3 The Smallest Useful Version Without AI

A proper feasibility check always starts with an uncomfortable, pragmatic exercise: what is the smallest functional version of the product if we remove all AI components?

This minimal version—the dry, unglamorous skeleton—reveals whether the AI part is truly adding value or simply decorating the pitch deck. In some cases, AI becomes a natural extension: improving efficiency, reducing manual operations, or scaling a process beyond human capacity. In others, the non-AI core already solves 80% of the problem, and machine learning becomes optional rather than foundational.

Understanding this difference early prevents months of unnecessary engineering work and clarifies whether the idea should move to the next phase of evaluation.

2. Do You Have the Data for This?

Once an idea passes the basic relevance test—meaning AI is genuinely needed—the next barrier is almost always data. Not the theoretical “we have data somewhere” type, but the real, structured, accessible, legally usable datasets that machine learning actually requires.

Most AI projects fail here, quietly and early.

2.1 Quantity, Quality, and Access: The Unromantic Reality

Every AI model, whether as simple as a classifier or as complex as an agentic system, relies on one thing: clean, labeled, representative data. And here the conversation typically collapses.

Teams proudly mention “millions of records”, but once the actual export is opened, it reveals:

- duplicates

- inconsistent entries

- missing critical fields

- data scattered across multiple systems

- fields that never meant what people assumed they meant

- logs missing timestamps, context, or user IDs

Quantity is rarely the real constraint. Quality almost always is.

And even when quality exists, access often does not. Corporate legal, compliance, or infrastructure limitations can stretch a project by months—or stop it entirely.
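You can script a first-pass audit of an export in a few lines, before anyone talks about models. The sketch below assumes records arrive as Python dictionaries and that `customer_id`, `timestamp`, and `label` are the critical fields. Both the field names and the sample rows are hypothetical.

```python
from collections import Counter

# Hypothetical critical fields; replace with the fields your model actually needs.
CRITICAL_FIELDS = ("customer_id", "timestamp", "label")

def audit(records: list[dict]) -> dict:
    """Count exact duplicates and records missing any critical field."""
    # Turn each record into a hashable, key-ordered tuple to detect exact duplicates.
    keys = Counter(tuple(sorted(r.items())) for r in records)
    duplicates = sum(count - 1 for count in keys.values() if count > 1)
    # Treat None and empty strings as missing values.
    missing = Counter(
        field
        for r in records
        for field in CRITICAL_FIELDS
        if r.get(field) in (None, "")
    )
    return {"total": len(records), "duplicates": duplicates, "missing": dict(missing)}

rows = [
    {"customer_id": 1, "timestamp": "2024-01-05", "label": "churn"},
    {"customer_id": 1, "timestamp": "2024-01-05", "label": "churn"},  # exact duplicate
    {"customer_id": 2, "timestamp": None, "label": "stay"},           # no timestamp
    {"customer_id": 3, "timestamp": "2024-02-11", "label": ""},       # unlabeled
]
print(audit(rows))
```

Exact-match deduplication misses near-duplicates such as typos or different casing, so this gives only a lower bound on the real problem.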

2.2 Data Ownership: Who Does It Actually Belong To?

Another uncomfortable but necessary stage: confirming that you can legally use the data for model training.

Ownership is frequently assumed rather than verified:

- Data stored by third-party platforms that prohibit model training

- User-generated data with strict consent requirements

- Personal or behavioral data requiring anonymization

- Datasets tied to expired contracts

- Information shared internally but not officially licensed

In some sectors (finance, healthcare, logistics), these questions define not just feasibility but liability. If ownership or permissions are unclear, the model cannot legally be trained on that data, and no technical workaround changes that.

2.3 The Cold Start Problem: When Data Simply Doesn’t Exist

Many promising ideas reach a dead end because the company has no historical data, or has the wrong type of data, or has too little of it to train a reliable model.

At this point, organizations usually consider three painful alternatives:

- Collect data manually. Slow, expensive, but sometimes the only viable path.

- Pay for annotation or labeling. A hidden cost that can exceed development budgets.

- Use synthetic or bootstrapped datasets. A temporary patch—helpful for prototyping, but not a substitute for real production data.

Every serious feasibility check includes one blunt conclusion: if you don’t have the right data, you don’t have an AI project yet.

Some ideas recover through careful data strategy. Others require redesigning the concept entirely.

3. Can You Build This With Your Budget and Timeline?

Even when the idea makes sense and the data exists, an AI project can still fail on the most pragmatic parameter—resources. AI development is not a linear process. Models need iteration, infrastructure needs tuning, and unforeseen costs appear exactly where no one expected them.

A feasibility assessment must clarify whether the organization can realistically sustain the effort.

3.1 Budget Breakdown: The Part Nobody Likes Discussing

Most teams underestimate AI costs because they focus on the visible part—development hours. In reality, the budget is split across multiple categories, each with its own risks:

- Data work. Cleaning, labeling, annotation, enrichment, validation.

- Engineering. ML engineers, data scientists, backend, MLOps.

- Infrastructure. GPUs, vector databases, storage, logging, monitoring.

- Experiments. Multiple model versions, parameter tuning, failed attempts.

- Compliance & security. Especially in regulated industries.

- Long-term maintenance. Model retraining, drift detection, pipeline monitoring.

An MVP can be built for a reasonable cost. A production-grade AI system is an ongoing financial commitment.

This difference is often misunderstood—or not discussed at all.
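One way to make the difference between an MVP and a production system visible is to separate one-off costs from recurring ones and project them over several years. All category names and amounts below are placeholders, not benchmarks. Substitute your own estimates.

```python
from dataclasses import dataclass

@dataclass
class CostItem:
    name: str
    one_off: float   # spent once, e.g. initial labeling or the MVP build
    annual: float    # recurring every year the system runs

def total_cost(items: list[CostItem], years: int) -> float:
    """Total cost of ownership: one-off costs plus recurring costs over `years`."""
    return sum(item.one_off + item.annual * years for item in items)

# Placeholder figures for illustration only.
budget = [
    CostItem("data work", one_off=40_000, annual=10_000),
    CostItem("engineering", one_off=120_000, annual=60_000),
    CostItem("infrastructure", one_off=5_000, annual=30_000),
    CostItem("maintenance & retraining", one_off=0, annual=25_000),
]

one_off = sum(i.one_off for i in budget)
for years in (1, 3):
    tco = total_cost(budget, years)
    print(f"{years} year(s): total {tco:,.0f}, recurring share {1 - one_off / tco:.0%}")
```

With these made-up numbers, cumulative recurring spending overtakes the one-off build cost during the second year. That is the pattern this section warns about.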

3.2 Timeline Reality Check: How Long It Actually Takes

The second major misconception concerns time. Even a well-structured AI project rarely moves smoothly from idea to deployment.

Typical timelines:

- 2–4 months — prototype or MVP

- 6–9 months — stable production version

- 12–18 months — fully integrated system with data pipelines, monitoring, retraining cycles

And this assumes the data is ready, the tech stack is modern, and decision-making is fast.

Delays appear when:

- data is harder to extract than expected

- integrations require rewriting legacy modules

- accuracy targets were unrealistic

- business users change requirements mid-project

- compliance slows approval cycles

A feasibility check protects a company from making promises its own infrastructure cannot support.

3.3 Hidden Costs: The Expenses That Outlive the Project

AI projects generate “aftercare costs” that many organizations overlook during planning. These costs begin the day the model goes live:

- Model drift — the system becomes less accurate over time.

- Retraining cycles — new data must be incorporated regularly.

- Monitoring & evaluation — performance must be tracked in production.

- Cloud costs — can grow unpredictably with user activity.

- Support & troubleshooting — especially when a model becomes mission-critical.

This is why feasibility is not only about building the system, but also about owning it.

Some organizations discover they can afford development but not maintenance—and the project quietly fades out after the first pilot.
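Drift monitoring does not have to start with heavy tooling. A common lightweight check is the Population Stability Index (PSI). It compares how a feature was distributed at training time with how it is distributed in production. The sketch below uses plain Python. The bin edges, the sample values, and the 0.2 alert threshold are assumptions; 0.2 is a widely used rule of thumb, not a universal standard.

```python
import math
from bisect import bisect_right

def bin_shares(values: list[float], edges: list[float]) -> list[float]:
    """Share of values falling into each bin defined by sorted interior edges."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]

def psi(expected: list[float], actual: list[float], edges: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index between a training sample and a production sample."""
    total = 0.0
    for e, a in zip(bin_shares(expected, edges), bin_shares(actual, edges)):
        # A small epsilon avoids log(0) and division by zero for empty bins.
        e, a = max(e, eps), max(a, eps)
        total += (a - e) * math.log(a / e)
    return total

edges = [10.0, 20.0, 30.0]                     # hypothetical bin boundaries
training = [5, 12, 15, 18, 22, 25, 28, 35]     # feature values seen during training
production = [25, 28, 31, 33, 35, 38, 40, 45]  # the same feature, months later

score = psi(training, production, edges)
print(f"PSI = {score:.2f}", "-> investigate / retrain" if score > 0.2 else "-> stable")
```

Empty bins make PSI very sensitive to the epsilon. In practice, bins are usually derived from training-data quantiles so that each bin holds enough samples.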

4. Technical Feasibility: What Kind of Model Can You Actually Build?

Once the idea, data, budget, and timeline pass the initial filter, the next step is purely technical: is the system you imagine even possible with today’s models and your infrastructure? A surprising number of projects stall here because the desired outcome simply exceeds what current ML techniques or available architecture can deliver.

Technical feasibility is not about optimism—it is about physics, complexity, and computing limits.

4.1 Choosing the Right Type of Model: What the Idea Really Needs

Not every concept requires a large language model, and not every workflow benefits from a neural network. A proper feasibility check identifies the minimal architecture capable of solving the problem.

Common categories include:

- Classification models for detecting categories, fraud, defects, anomalies.

- Forecasting models for demand, inventory, pricing, risk.

- Recommendation engines for content, products, logistics routing.

- RAG (retrieval-augmented generation) systems for document-heavy domains like insurance, HR, legal.

- Agentic pipelines for multi-step automation with decision-making.

- Simple heuristics mixed with ML, which are often more stable and cost-effective than a pure neural approach.

The goal is not to choose the most fashionable option, but the most reliable one. And reliability usually comes from simplicity.
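A practical way to apply the "simplest reliable option" principle is to measure trivial baselines before building anything. A majority-class guess or a one-line heuristic may already reach the accuracy the business needs. If so, a trained model may not be worth its cost. The labels, the amounts, and the threshold rule below are hypothetical.

```python
from collections import Counter

def accuracy(predictions: list[str], labels: list[str]) -> float:
    """Fraction of predictions that match the true labels."""
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)

# Hypothetical labeled history: transaction amount and whether it was fraudulent.
amounts = [20, 35, 15, 900, 40, 1200, 25, 30, 60, 850]
labels  = ["ok", "ok", "ok", "fraud", "ok", "fraud", "ok", "ok", "ok", "ok"]

# Baseline 1: always predict the most frequent class.
majority = Counter(labels).most_common(1)[0][0]
majority_acc = accuracy([majority] * len(labels), labels)

# Baseline 2: a one-line heuristic that flags large amounts.
heuristic_acc = accuracy(["fraud" if a > 500 else "ok" for a in amounts], labels)

print(f"majority class: {majority_acc:.0%}, heuristic: {heuristic_acc:.0%}")
```

On imbalanced data like fraud, plain accuracy flatters the majority-class baseline. Precision and recall on the rare class usually give a more honest comparison.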

4.2 Architecture Complexity: Where Feasibility Breaks Down

Many AI ideas sound reasonable until you map the architecture. Then it becomes clear the system needs:

- multiple data pipelines

- real-time inference

- streaming data

- vector databases

- GPU scaling

- low-latency APIs

- simultaneous model orchestration

Each additional component increases the probability of failure. A seemingly “simple” AI assistant can, in reality, require a dozen services running in harmony—something only a few organizations can maintain.

Sometimes, the issue is not technological impossibility but excessive operational burden. An AI system may be buildable but not survivable within the client’s infrastructure.

4.3 Integration-Level Risks: The Hidden Barrier Nobody Mentions

Even a strong model is useless if it cannot integrate into the organization’s ecosystem. This part of feasibility is often underestimated.

Main risks include:

- Legacy systems. Old CRMs, ERPs, and databases that cannot handle modern APIs.

- Security restrictions. No ability to send data externally, so no cloud inference.

- Latency constraints. A model that needs 2 seconds per prediction cannot support real-time workflows.

- Scalability limitations. Systems that collapse as traffic grows.

- Unclear ownership of internal APIs. Integration paths that exist “on paper only”.

This is why feasibility checks often end with a practical recommendation: simplify the ambition or upgrade the infrastructure—otherwise, the system cannot work in production.

Technical feasibility is never about “can this be built in theory?” It’s about “can this be built here, with this infrastructure, maintained by this team?”
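You can test the latency constraint in particular before integration work starts. Time the candidate model on representative inputs and compare a high percentile, not the average, against the workflow's budget. In this sketch, `predict` is a hypothetical stand-in that sleeps for a few milliseconds, and the 200 ms budget is an assumed figure.

```python
import statistics
import time

def predict(x: float) -> float:
    # Hypothetical stand-in for a real model call; replace with your inference function.
    time.sleep(0.005)
    return x * 2

def p95_latency_ms(fn, inputs) -> float:
    """Time each call and return the 95th-percentile latency in milliseconds."""
    timings = []
    for x in inputs:
        start = time.perf_counter()
        fn(x)
        timings.append((time.perf_counter() - start) * 1000)
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    return statistics.quantiles(timings, n=20)[-1]

BUDGET_MS = 200  # assumed end-to-end budget for a real-time workflow
p95 = p95_latency_ms(predict, range(50))
print(f"p95 = {p95:.1f} ms ->", "fits" if p95 <= BUDGET_MS else "too slow for real-time use")
```

Measure in an environment close to production. Network hops and cold starts in the real integration path can cost more than the model itself.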

5. Team Feasibility: Who Will Actually Build and Maintain the System?

Even a well-designed AI concept with solid data and a reasonable budget can fail if the team behind it is unprepared. AI is not just another software feature. It requires mathematical expertise, understanding of statistical behavior, and the ability to reason about uncertainty—skills that generic developers or outsourced staff rarely possess.

A feasibility check must evaluate whether the organization actually has the capability to deliver what it imagines.

5.1 Real AI Engineers vs. Generic Developers

The most common misunderstanding: assuming any developer who “knows Python” can build a machine learning system. This assumption derails as many AI projects as budget or data problems do.

A real AI/ML engineer has:

- a strong mathematical background

- knowledge of probability, optimization, and linear algebra

- experience evaluating model performance

- understanding of overfitting, drift, bias, variance

- the ability to interpret model errors, not just run code

- familiarity with MLOps, orchestration, and deployment pipelines

Generic developers, by contrast, may know how to implement a function but not how to design a statistical system that behaves reliably in the real world.

5.2 Red Flags: Signs a Company or Team Isn’t Prepared

A feasibility check also exposes organizational warning signs:

- “AI team” composed entirely of frontend/backend developers

- No full-time ML specialists in leadership roles

- Heavy reliance on freelancers or staff-resellers

- LLM wrappers sold as “AI systems”

- No ability to explain model failures

- Absence of MLOps or data engineering capacity

These patterns usually indicate not only risk, but near-inevitable failure once the project moves beyond prototyping.

5.3 What a Proper AI Team Looks Like

A successful AI initiative requires a balanced, interdisciplinary structure:

- Data Scientist. Designs models, analyzes data, sets accuracy benchmarks.

- ML Engineer. Implements, trains, tunes, evaluates, and deploys the model.

- Data Engineer. Builds pipelines, integrates sources, ensures data quality.

- MLOps Specialist. Handles infrastructure, monitoring, retraining, automation.

- Domain Expert. Interprets results in business context.

- Product/Project Lead with AI Competence. Keeps expectations realistic and ensures technical decisions align with business needs.

This is not bureaucracy—this is survival. Without the right team composition, AI systems degrade, fail silently, or create outcomes nobody understands or can control.

A feasibility assessment must verify not only who will build, but who will maintain, own, and evolve the model after launch. Otherwise, the project is not viable, no matter how promising the idea appears on paper.

See also: Math First, Buzzwords Later: Evaluating a Software Company’s True AI Competence.

6. Pre-MVP Risk Map: Understanding What Can Go Wrong Early

Before a single prototype is built, an AI initiative already carries a set of predictable risks. The purpose of a feasibility assessment is not to eliminate these risks—that is impossible—but to identify them clearly and decide whether the organization can tolerate them.

A project that looks promising on slides may collapse the moment these risks are mapped onto reality.

6.1 Data Risks

Data is the foundation of every AI model, yet it is often the least stable part of the project. Common risks include:

- Insufficient training data

- Poor labeling or mislabeled samples

- Bias from incomplete datasets

- Changes in data structure over time

- Inability to access locked or third-party data

If data risk is high, model performance becomes unpredictable — and fixing it usually requires months, not days.

6.2 Technical Risks

Not all ideas are technically feasible within the client’s current ecosystem. High technical risks include:

- Real-time requirements the infrastructure cannot support

- Dependency on GPU scaling not available internally

- Integration with legacy systems

- Inaccurate assumptions about model accuracy

- Unclear evaluation metrics

Technical risk determines whether the project is even buildable, not just desirable.

6.3 Product Risks

Even a well-designed AI model can fail when interacting with real users. Product-level risks include:

- Unclear problem definition

- Unrealistic expectations of “intelligence”

- Business processes that are not ready to adapt

- User distrust of automated decisions

- Low tolerance for model mistakes

A model with 90% accuracy may look strong in theory yet be unusable in practice if the use case requires 99.5%.
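The gap between those two numbers is easier to see as absolute error counts. The sketch below assumes a hypothetical volume of 10,000 automated decisions per day.

```python
DECISIONS_PER_DAY = 10_000  # hypothetical volume, for illustration

def daily_errors(accuracy: float, volume: int = DECISIONS_PER_DAY) -> int:
    """Expected number of wrong decisions per day at a given accuracy."""
    return round(volume * (1 - accuracy))

for acc in (0.90, 0.995):
    print(f"{acc:.1%} accuracy -> about {daily_errors(acc)} wrong decisions per day")
```

At this volume, the 90% model produces twenty times as many errors as the 99.5% one: about 1,000 per day versus about 50. Someone has to catch, explain, or absorb each of those errors.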

6.4 Market & ROI Risks

A feasibility check must also answer a blunt question: will this system pay for itself?

Risks include:

- Unclear monetization

- Benefits that are hard to quantify

- Solutions that reduce cost but don’t create revenue

- Projects that depend on hypothetical future datasets

- Unclear owner responsible for commercial outcomes

Many AI ideas fail because the ROI horizon is measured in years while stakeholders expect impact within months.
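A rough payback calculation makes that horizon explicit. The sketch assumes constant monthly net savings, which real projects rarely have. Every figure in it is a placeholder.

```python
import math

def payback_months(upfront_cost: float, monthly_benefit: float, monthly_run_cost: float) -> float:
    """Months until cumulative net benefit covers the upfront cost (inf if never)."""
    net = monthly_benefit - monthly_run_cost
    if net <= 0:
        return math.inf  # running costs eat the benefit: the system never pays for itself
    return upfront_cost / net

# Placeholder figures: build cost, monthly savings, monthly running cost.
months = payback_months(upfront_cost=180_000, monthly_benefit=15_000, monthly_run_cost=7_000)
print(f"payback in about {math.ceil(months)} months")
```

With these numbers, a stakeholder expecting results within two quarters would be disappointed, even though the project eventually pays off.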

6.5 Operational Risks

AI systems do not end at deployment. They require maintenance, retraining, monitoring, and continuous adjustment.

High operational risks include:

- No internal MLOps capability

- No assigned owner for model performance

- Unclear retraining policy

- Lack of monitoring tools

- Dependency on a single specialist or external provider

If no one is responsible for the model after launch, silent degradation is almost guaranteed.
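Assigning an owner is an organizational step, but the monitoring itself can start small. The sketch below tracks accuracy over a sliding window of recent predictions whose true outcomes are known. It flags the model when accuracy drops below a threshold. The window size and threshold are assumptions, as is the idea that ground-truth labels arrive promptly; in many domains they arrive late.

```python
from collections import deque

class RollingAccuracyMonitor:
    """Tracks accuracy over the most recent `window` labeled predictions."""

    def __init__(self, window: int = 500, threshold: float = 0.85):
        self.results = deque(maxlen=window)  # True = correct, False = wrong
        self.threshold = threshold

    def record(self, prediction, actual) -> None:
        self.results.append(prediction == actual)

    def accuracy(self) -> float:
        # With no labeled outcomes yet, report 1.0 rather than dividing by zero.
        return sum(self.results) / len(self.results) if self.results else 1.0

    def needs_attention(self) -> bool:
        # Alert only once the window is full, to avoid noise from tiny samples.
        full = len(self.results) == self.results.maxlen
        return full and self.accuracy() < self.threshold

monitor = RollingAccuracyMonitor(window=4, threshold=0.75)
for pred, actual in [("a", "a"), ("b", "b"), ("a", "b"), ("b", "a")]:
    monitor.record(pred, actual)
print(monitor.accuracy(), monitor.needs_attention())  # 0.5 True
```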

Risk Summary: A Simple Feasibility Signal

A pre-MVP risk map is typically represented as a three-color matrix:

| Risk Type | Level | Implication |
|---|---|---|
| Data | 🔴 High / 🟡 Medium / 🟢 Low | Determines accuracy ceiling |
| Technical | 🔴 / 🟡 / 🟢 | Determines buildability |
| Product | 🔴 / 🟡 / 🟢 | Determines usability |
| Market & ROI | 🔴 / 🟡 / 🟢 | Determines funding stability |
| Operational | 🔴 / 🟡 / 🟢 | Determines long-term survival |

If more than two categories are red, the project should not proceed. A feasibility check exists to reveal this early — before months of development sink into a dead end.
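The matrix and its stopping rule are simple enough to encode directly, which helps keep decisions consistent across projects. The "more than two red" rule comes from the text above. Listing the remaining red and yellow categories as conditions to resolve is an added assumption.

```python
from enum import Enum

class Level(Enum):
    RED = "high"
    YELLOW = "medium"
    GREEN = "low"

def assess(risks: dict[str, Level]) -> str:
    """Apply the stopping rule: more than two red categories means do not proceed."""
    red = [name for name, level in risks.items() if level is Level.RED]
    yellow = [name for name, level in risks.items() if level is Level.YELLOW]
    if len(red) > 2:
        return f"Stop: {len(red)} red categories ({', '.join(red)})"
    open_items = red + yellow
    if open_items:
        return "Proceed with conditions: resolve " + ", ".join(open_items)
    return "Proceed"

risk_map = {
    "Data": Level.RED,
    "Technical": Level.YELLOW,
    "Product": Level.GREEN,
    "Market & ROI": Level.RED,
    "Operational": Level.YELLOW,
}
print(assess(risk_map))
```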

7. Build vs. Buy vs. Hybrid

Even if an AI project is technically feasible, the next strategic decision is whether the organization should build the system from scratch, buy an existing solution, or use a hybrid approach that blends external models with internal logic.

This decision has more impact on cost, reliability, and delivery time than any algorithmic choice. A feasibility assessment must determine which path creates the least risk with the highest long-term stability.

7.1 When Buying Makes More Sense Than Building

There are use cases where developing a custom AI model is unnecessary and economically irrational. Buying an existing solution is often preferable when:

- The problem is standardized (OCR, transcription, sentiment analysis)

- Accuracy requirements are moderate

- Integration time is more important than customization

- Internal teams lack long-term AI maintenance capacity

- Vendor lock-in is acceptable

In such cases, off-the-shelf AI provides predictable performance, immediate deployment, and lower operational risk.

7.2 When You Need a Fully Custom Model

A custom-built solution becomes necessary when the organization’s needs cannot be met by generic tools. You should build your own model if:

- The domain is highly specialized (claims automation, pricing, logistics)

- Accuracy targets exceed what commercial APIs can deliver

- Your data is proprietary and cannot leave internal infrastructure

- The system must adapt to domain-specific rules and behaviors

- There are strict regulatory or privacy requirements

In these cases, custom models are not a luxury—they are the only path to strategic differentiation.

7.3 The Hybrid Architecture: Often the Most Realistic Option

Many of today’s successful systems are neither fully custom nor fully bought—they are hybrids. These architectures combine:

- Large language models (LLMs) for reasoning and text processing

- Custom ML models for domain-specific predictions

- Rule engines for deterministic logic

- RAG pipelines for document-heavy workflows

A hybrid approach often offers the best balance between flexibility, cost, and performance. It avoids the limitations of pure off-the-shelf solutions while reducing the development burden of custom AI.

7.4 Decision Criteria: How to Choose the Right Path

A feasibility assessment evaluates the optimal direction based on five critical factors:

- Accuracy requirements. How accurate must the output be?

- Data sensitivity. Can data be shared with external APIs?

- Infrastructure. Does the organization support AI workloads?

- Timeline. How fast must the system be delivered?

- Budget. Can the organization afford long-term AI ownership?

In most cases, the final recommendation is not ideological but practical: the right architecture is the one you can maintain without crisis.

8. Outputs of the Feasibility Check

A proper feasibility assessment does not end with a vague recommendation like “proceed” or “delay”. It produces a set of concrete, structured outputs that give stakeholders a realistic picture of what can be built, how, and at what cost. These outputs become the foundation for planning, budgeting, and aligning expectations.

The goal is simple: remove uncertainty before any development begins.

8.1 Go / No-Go Decision

The first and most important outcome is a clear, unambiguous verdict:

- Go — the project is feasible under current conditions.

- No-Go — the idea is not viable without major changes.

- Conditional Go — feasible only if specific requirements are met (data collection, infrastructure upgrades, staffing).

This decision alone saves organizations months of uncertainty and preventable expense.

8.2 Minimal Viable Dataset (MVD)

The feasibility check defines the smallest dataset required to train a useful model — not a perfect one, but one good enough for an MVP. The MVD typically includes:

- Data types and sources

- Required fields and labeling rules

- Minimum record counts per class or scenario

- Quality standards (completeness, consistency, noise tolerance)

This is crucial because many organizations discover only at this point that they lack the necessary historical data.
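Once the thresholds are agreed, checking a candidate dataset against the MVD's minimum counts is mechanical. The class names and minimums below are hypothetical. The right numbers depend on the model type and on how hard the classes are to separate.

```python
from collections import Counter

# Hypothetical MVD thresholds: minimum labeled examples required per class.
MIN_PER_CLASS = {"approve": 1_000, "reject": 1_000, "escalate": 300}

def mvd_gaps(labels: list[str], minimums: dict[str, int]) -> dict[str, int]:
    """Return how many more labeled examples each class needs (empty dict = ready)."""
    counts = Counter(labels)
    return {
        cls: needed - counts[cls]
        for cls, needed in minimums.items()
        if counts[cls] < needed
    }

# Simulated label export: plenty of approvals, few escalations.
labels = ["approve"] * 4_200 + ["reject"] * 850 + ["escalate"] * 40
print(mvd_gaps(labels, MIN_PER_CLASS))  # {'reject': 150, 'escalate': 260}
```

The largest gap is often in the rare but important class. Here that is escalations, which is exactly where manual collection or labeling budgets tend to go.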

8.3 Technical Architecture Draft

A feasibility assessment also outputs a high-level architecture for the proposed AI system, including:

- Model type (classification, forecasting, RAG, agentic pipeline)

- Data pipeline structure

- Storage and vector indexing requirements

- Inference pathways and latency tolerance

- Integrations with internal and external systems

This ensures stakeholders understand the technical implications before committing to a development plan.

8.4 Budget & Timeline Estimate

The feasibility check includes a realistic, evidence-based estimate of:

- Development costs for MVP and for the full product

- Ongoing operational and cloud costs

- Expected annotation or labeling expenses

- Projected delivery milestones

These projections are not optimistic; they are anchored in actual constraints, which is why they help prevent future overruns.

8.5 Immediate Next Steps

Finally, the assessment defines a specific sequence of actionable next steps. Examples include:

- Begin structured data collection and cleaning

- Run a small controlled experiment to validate assumptions

- Upgrade key integration points or legacy modules

- Prepare labeling instructions and annotation workflow

- Build a non-AI prototype to test the user journey

These steps help transform the abstract idea into a concrete project roadmap, making the transition from planning to execution smoother and more predictable.

Together, these outputs form the blueprint for all subsequent design and development efforts. Without them, AI projects operate on assumptions — the primary cause of failure.

9. A Simple Self-Test: 10 Questions That Predict Whether Your AI Project Will Succeed

Even without a full consulting engagement, any organization can perform a quick, honest self-test to understand whether an AI project has a real chance of success.

These ten questions expose the core assumptions behind the idea — and reveal weak spots early, when they are still fixable.

If more than three answers are uncertain or negative, the project is not yet ready for development.

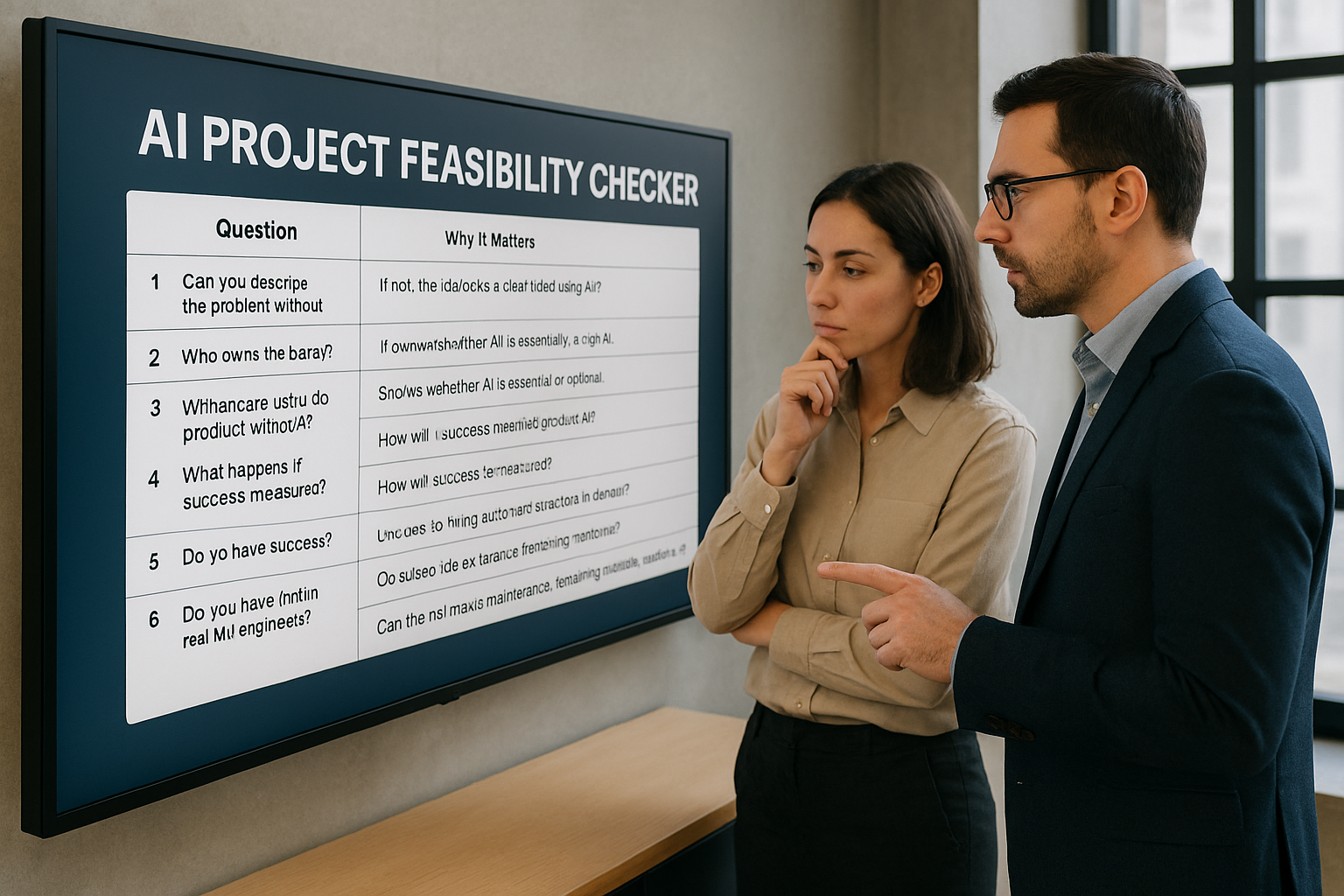

9.1 The 10-Question Feasibility Checklist

| # | Question | Why It Matters |
|---|---|---|
| 1 | Can you describe the problem without using the word “AI”? | If not, the idea lacks a clear functional definition. |
| 2 | Who owns the data you plan to train on? | If ownership is unclear, the project cannot legally proceed. |
| 3 | Is the outcome deterministic or probabilistic? | Deterministic tasks rarely require machine learning. |
| 4 | What is the smallest useful version of the product without AI? | This reveals whether AI is essential or optional. |
| 5 | What happens if the AI component is removed entirely? | Shows whether the system is overly dependent on uncertain technology. |
| 6 | Do you have enough data to train and validate a model? | “We have something in the database” is not enough for ML readiness. |
| 7 | How will success be measured? | Unclear metrics make failure inevitable. |
| 8 | Do users trust automated decisions in this domain? | In sensitive industries, small errors create major risks. |
| 9 | Do you have (or plan to hire) real ML engineers? | The team determines whether the model can survive long-term. |
| 10 | Can the organization afford ongoing maintenance, retraining, and monitoring? | AI requires recurring investment; it’s not a one-time expense. |
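Teams that want to run the checklist repeatedly can record each answer as clear, uncertain, or negative. They can then apply the rule stated at the start of this section: more than three uncertain or negative answers means the project is not ready. The short question keys are hypothetical paraphrases added for this sketch.

```python
from enum import Enum

class Answer(Enum):
    CLEAR = "clear, positive answer"
    UNCERTAIN = "uncertain"
    NEGATIVE = "negative"

# Hypothetical short keys paraphrasing the ten checklist questions, in table order.
QUESTIONS = [
    "problem_without_ai", "data_owner", "probabilistic_outcome", "non_ai_version",
    "works_without_ai", "enough_data", "success_metric", "user_trust",
    "ml_engineers", "maintenance_budget",
]

def ready_for_development(answers: dict[str, Answer]) -> tuple[bool, list[str]]:
    """Apply the checklist rule: more than three weak answers means not ready."""
    missing = [q for q in QUESTIONS if q not in answers]
    if missing:
        raise ValueError(f"unanswered questions: {missing}")
    weak = [q for q in QUESTIONS if answers[q] is not Answer.CLEAR]
    return len(weak) <= 3, weak

answers = {q: Answer.CLEAR for q in QUESTIONS}
answers.update(data_owner=Answer.UNCERTAIN, enough_data=Answer.NEGATIVE,
               success_metric=Answer.UNCERTAIN, maintenance_budget=Answer.NEGATIVE)
ready, weak = ready_for_development(answers)
print(ready, weak)
```

Returning the list of weak answers, not just a verdict, turns the result directly into a to-do list for the next review.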

9.2 A Practical Use Case for the Checklist

This self-test is particularly useful before preparing budgets, presenting to stakeholders, or engaging vendors. It forces clarity and removes illusions early. Measuring feasibility at the idea stage prevents wasted effort and aligns expectations with reality.

The strongest AI projects are not those with the most ambitious ideas—but those with clear assumptions, realistic boundaries, and well-defined metrics for success.

10. AI Should Be a Cost Saver—Not a Cost Generator

By the time you complete a proper feasibility check, the original idea—bold, polished, full of promise—inevitably changes shape. It becomes calmer, more grounded, and far more practical.

You stop thinking about AI as a magic layer you simply “add,” and start seeing it as an engineering decision: what data it needs, how stable the model will be, who maintains it, and what it will cost once it goes live.

This shift is healthy. It separates wishful thinking from workable strategy. Many AI projects fail not because they lack ambition, but because no one stopped early enough to test the idea against real-world constraints. A feasibility check exposes these constraints before the first dollar is spent on development, saving months of time, eliminating blind spots, and preventing beautifully designed prototypes from collapsing under production pressure.

The best part is that you don’t need a full AI department to start this process. The questions and frameworks in this article are clear enough for any product owner, CTO, or founder to perform an internal reality check: define the problem without hype, inspect the data you truly have, examine timelines and budget, and map the risks that could break the project long before launch. If the answers hold up—you’re already in a stronger position than most teams that rush forward without looking.

And if at any point the picture becomes unclear or technically ambiguous, this is exactly where asking for help makes sense. LaSoft’s AI/ML specialists support companies that need an honest, structured assessment—what can be built right now, what requires preparation, and what should be postponed or redesigned entirely. Sometimes the most valuable outcome is not a green light, but a clear understanding of what must change.

Whether you run the feasibility check yourself or involve an experienced team, the goal remains the same: ensure that your AI initiative is viable, maintainable, and worth the investment. AI succeeds not because it sounds impressive, but because it functions realistically, predictably, and in alignment with the constraints of your business.